
Golden NuGet!

For anyone using VS2010, one tool you need to try out is NuGet (actually pronounced new-get according to Microsoft, but what do they know?!).

What is it?

NuGet is Microsoft’s answer to the much-lamented gap in the .Net space for a package management tool like Ruby’s Gems or Linux’s RPM.

NuGet simply looks after the download and installation of tools and libraries you use in your projects. It will get the appropriate version of all needed DLLs, configuration files, and related tools, put them in a folder, and do any configuration if needed. Simple! Also useful is the fact that it will download any dependencies – so for example if we want to install Shouldly, which uses Rhino Mocks, NuGet will get the appropriate version of that as well.

And that’s about it. So it won’t change your world, but it will save you a good few hours each year hunting for, downloading, and installing tools.

History

NuGet started out as NuPack, which kind of took over from the Nu open source project. Indeed, NuGet is itself open source, continuing Microsoft’s trend of a more transparent and community-involving future, which is nice.

There are other package managers for .Net as well.

However, as usual, things don’t tend to take off in the .Net community until Microsoft makes their version, which then becomes king. I haven’t tried any of those package managers, but I wouldn’t want to bet on them being too successful now NuGet’s out.

Anyway, enough of the history lesson, how do I use this thing?!

Installation

Firstly, you need to get it installed, which is simple as it’s in the Visual Studio Extension Manager (Tools -> Extension Manager). Search the Online Gallery for ‘NuGet’ and you should find it:

A couple of clicks later and you’re ready to go!

Putting it to Use

There are two interfaces. You can either right-click on a project and select ‘Add Library Reference’, and find what you need with a handy visual interface (the same as the Extension Manager):

Or, by selecting Tools -> Library Package Manager -> Package Manager Console, you can use the PowerShell-based console version, like a real developer!

(Check out some NuGet Console Commands, if that’s how you want to roll.)

Either way, you’ll end up with a new ‘packages’ folder in your solution, containing the bits you need (as well as having references automatically added to your project, and configuration set):

Et voilà! You’re ready to log/unit test/mock/whatever to your heart’s content.

You can also delete packages, update packages, and so on – it’s all pretty straightforward, so I’ll leave you to work that out.

So what are you waiting for? Go and nu-get it now!! (sorry..)

Nice up those Assertions with Shouldly!

One thing I noticed while having a play with Ruby was that the syntax for their testing tools is quite a lot more natural-language like and easier to read – here’s an example from an RSpec tutorial:

    describe User do
      it "should be in any roles assigned to it" do
        user = User.new
        user.assign_role("assigned role")
        user.should be_in_role("assigned role")
      end

      it "should NOT be in any roles not assigned to it" do
        user = User.new
        user.should_not be_in_role("unassigned role")
      end
    end

Aside from the test name being a string, you can see the actual assertions in the format ‘variable.should be_some_value’, which is kind of more readable than it might be in C#:

Assert.Contains(user, unassignedRoles);

or

Assert.That(unassignedRoles.Contains(user));

Admittedly, the second example is nicer to read – the trouble with that is that you’re checking a true/false result, so the feedback you get from NUnit isn’t great:

    Test 'Access_Tests.TestAddUser' failed:
      Expected: True
      But was:  False

Fortunately, there are a few tools coming out for .Net now which address this situation in a bit more of a Ruby-like way. The one I’ve been using recently is Shouldly, an open source project on GitHub.

Using Shouldly (which is basically just a wrapper around NUnit and Rhino Mocks), you can go wild with should-style assertions:

age.ShouldBe(3);

family.ShouldContain("mum");

greetingMessage.ShouldStartWith("Sup y'all");

And so on. Not bad, not bad, not sure if it’s worth learning another API for though. However, the real beauty of Shouldly is what you get when an assertion fails. Instead of an NUnit-style

Expected: 3

But was: 2

– which gives you a clue, but isn’t terribly helpful – you get:

age

should be 3

but was 2

See that small but important difference? The variable name is there in the failure message, which makes working out what went wrong a fair bit easier – particularly when a test fails that you haven’t seen for a while.
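To get a feel for how an assertion can know the name of the thing it is checking, here is a minimal sketch. It is not Shouldly's actual implementation: `ShouldSketch` and its `ShouldBe` method are made-up names for illustration, and the sketch leans on the `CallerArgumentExpression` attribute, which assumes a C# 10 or later compiler (a good deal newer than VS2010).

```csharp
using System;
using System.Collections.Generic;
using System.Runtime.CompilerServices;

// Hypothetical helper, invented for this sketch; not part of Shouldly.
public static class ShouldSketch
{
    public static void ShouldBe<T>(
        this T actual,
        T expected,
        // The compiler fills this in with the source text of the 'actual' argument.
        [CallerArgumentExpression("actual")] string expression = "")
    {
        if (!EqualityComparer<T>.Default.Equals(actual, expected))
        {
            throw new InvalidOperationException(
                $"{expression}\n    should be {expected}\n    but was {actual}");
        }
    }
}

public static class Program
{
    public static void Main()
    {
        int age = 2;
        try
        {
            age.ShouldBe(3);
        }
        catch (InvalidOperationException ex)
        {
            // The message starts with "age", the text of the expression under test.
            Console.WriteLine(ex.Message);
        }
    }
}
```

The point is only that the failure message carries the expression text along with the expected and actual values, which is what makes the Shouldly output above quicker to read.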

Even more useful is what you get with checking calls in Rhino Mocks. Instead of calling

fileMover.AssertWasCalled(fm => fm.MoveFile("test.txt", "processed\\test.txt"));

and getting a rather ugly and unhelpful

Rhino.Mocks.Exceptions.ExpectationViolationException : IFileMover.MoveFile("test.txt", "processed\test.txt"); Expected #1, Actual #0.

With Shouldly, you call

fileMover.ShouldHaveBeenCalled(fm => fm.MoveFile("test.txt", "processed\\test.txt"));

and end up with

*Expecting*

MoveFile("test.txt", "processed\test.txt")

*Recorded*

0: MoveFile("test1.txt", "unprocessed\test1.txt")

1: MoveFile("test2.txt", "unprocessed\test2.txt")

As you can see, it’s not only much more obvious what’s happening, but you actually get a list of all of the calls that were made on the mock object, including parameters! That’s about half my unit test debugging gone right there. Sweet!
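The "list everything that was recorded" idea is easy to picture with a hand-rolled fake. This is only a sketch: `RecordingFileMover` and `ShouldHaveRecorded` are invented for illustration and say nothing about how Rhino Mocks or Shouldly work internally, and `IFileMover` is assumed to have a single `MoveFile(string, string)` method, as the messages above suggest.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Assumed shape of the interface from the messages above.
public interface IFileMover
{
    void MoveFile(string source, string destination);
}

// Hypothetical hand-rolled fake that remembers every call made on it.
public sealed class RecordingFileMover : IFileMover
{
    private readonly List<string> _calls = new List<string>();

    public void MoveFile(string source, string destination)
    {
        _calls.Add(Format(source, destination));
    }

    // Throws with the expected call and the full list of recorded calls
    // if the expected call was never made.
    public void ShouldHaveRecorded(string source, string destination)
    {
        string expected = Format(source, destination);
        if (_calls.Contains(expected))
        {
            return;
        }

        IEnumerable<string> recorded = _calls.Select((call, index) => $"{index}: {call}");
        throw new InvalidOperationException(
            "*Expecting*\n" + expected + "\n*Recorded*\n" + string.Join("\n", recorded));
    }

    private static string Format(string source, string destination)
    {
        return $"MoveFile(\"{source}\", \"{destination}\")";
    }
}

public static class Program
{
    public static void Main()
    {
        var fileMover = new RecordingFileMover();
        fileMover.MoveFile("test1.txt", @"unprocessed\test1.txt");
        fileMover.MoveFile("test2.txt", @"unprocessed\test2.txt");

        try
        {
            fileMover.ShouldHaveRecorded("test.txt", @"processed\test.txt");
        }
        catch (InvalidOperationException ex)
        {
            Console.WriteLine(ex.Message);
        }
    }
}
```

Seeing the calls that did happen next to the one that was expected is usually enough to spot a wrong file name or folder straight away.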

Shouldly isn’t a 1.0 yet, and still has some missing functionality, but I’d already find it hard to work without it. And it’s open source, so get yourself on GitHub, fork a branch, and see if you can make my life even easier!

Snoop for WPF

A must-have tool for WPF development

Anyone who’s been working with WPF for any length of time will probably already know about this, but for anyone else, one of the tools you have to check out is Snoop, originally posted by Pete Blois and now hosted on CodePlex.

Snoop is basically a debugging aid which allows you to see exactly what’s happening in your running WPF app. This can be very handy, as XAML can get a bit confusing after more than a couple of buttons, and there are also problems like binding errors (when you misspell a property name in your XAML) not making themselves apparent.

Snoop runs against your running application, so if you do anything fancy with dynamic control generation, it will be included. One of the more useful aspects is the ability to see everything in the visual tree:

Aside from all of the properties of the selected control being shown, you might also notice the selected UserGrid being displayed, which is handy – Snoop also cleverly highlights the selected visual component on your running app:

You can also use this to show any controls which have binding errors (a common issue in WFP if ur spellin is bad like wat mine is):

As well as those genuinely useful features, there are a couple of really cool things which are good for showing off – the first is the ability to magnify your app (so you can prove WPF really is using vector graphics!):

Check out them corners.. Sweet!

And last but not least, watch in amazement as Snoop explodes your app into a magnificent 3D masterpiece!

And that’s not just a static image either, you can spin it around and all sorts. Now come on, who’s not going to be impressed by that?!

So, to summarise, Snoop for WPF – if you haven’t got it, go get it now!

Visual Studio Code Snippets

Handy for Unit Tests – and Much More!

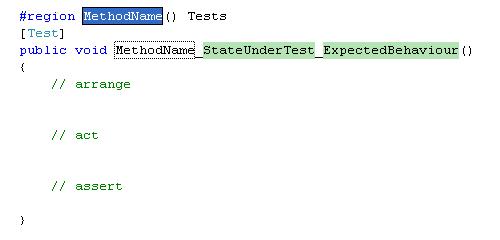

I recently found myself having to type some code in repeatedly while unit testing. This sort of thing:

Typical Unit Test Layout

You can see here that I’ve been using Roy Osherove’s naming convention, which I like a lot. I’m also using the AAA (arrange-act-assert) layout for the test code, which I like to comment for increased readability. Although I’ve done something similar many times, I must have seen something about Code Snippets recently as I thought it might be a good idea to give them a try.
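For anyone who can't picture it, a test in that style looks roughly like the sketch below. `ContactBook` is a made-up class for the sake of the example, and the NUnit `[Test]` attribute is only shown as a comment so the sketch compiles without any packages. The test name follows the MethodName_StateUnderTest_ExpectedBehaviour pattern.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical class under test, invented for this sketch.
public class ContactBook
{
    private readonly List<string> _names = new List<string>();

    public int Count
    {
        get { return _names.Count; }
    }

    public void Add(string name)
    {
        if (string.IsNullOrEmpty(name))
        {
            throw new ArgumentException("Name is required.", "name");
        }
        _names.Add(name);
    }
}

public class ContactBookTests
{
    // [Test]  (NUnit attribute, left as a comment so this runs standalone)
    public void Add_ValidName_IncreasesCountByOne()
    {
        // Arrange
        var book = new ContactBook();

        // Act
        book.Add("Alice");

        // Assert
        if (book.Count != 1)
        {
            throw new Exception("Count should be 1 but was " + book.Count);
        }
    }
}

public static class Program
{
    public static void Main()
    {
        new ContactBookTests().Add_ValidName_IncreasesCountByOne();
        Console.WriteLine("test passed");
    }
}
```

Everything apart from the method name and the three bodies is the same from test to test, which is exactly the part worth turning into a snippet.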

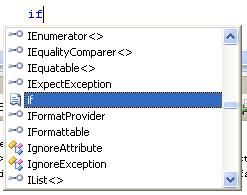

Code snippets are a part of Visual Studio (2008 certainly, not sure about before that). They are little code templates that you can get via intellisense, for example when you type ‘if’:

If Snippet in Intellisense

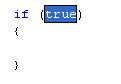

and then hit ‘Tab’ twice, you get the ‘If template’, with the section highlighted ready for you to put your condition in:

If Template

As it turns out, Visual Studio doesn’t ship with a snippet editor, but there is a very good open source one on CodePlex, which is linked to from MSDN. Using this, I can put in my unit test template, and define the ‘tokens’ that I want to replace (in this case, ‘method name’, ‘state under test’ & ‘expected behaviour’):

Unit Test in Snippet Editor

I’ve also put in the $selected$$end$ bit, which defines where the cursor goes when you’re done. You can see in this one that I’ve put in a #region for the method name as well – the snippet editor’s smart enough to realise this is the same as the one in the method name. Here I’ve defined the shortcut as ‘ter’ (for ‘Test with Region’), because I don’t like typing.

So save that, and Bob’s your uncle! Go back into Visual Studio, type ‘ter’ (tab-tab), and as if by magic, your very own unit test template appears, awaiting your input!

Unit Test Template

It’s the type of thing you wish you’d found out about years ago, and I’m sure over the next few days I’ll make about a hundred of these things for increasingly pointless bits of code. But it’s another productivity increase, and they all add up, so if I find a few more of these I’ll be able to write a day’s worth of code in about two keystrokes!

Now I wonder what else Visual Studio does that I don’t know about..

Code Coverage with PartCover

I’ve been getting more into Test Driven Development (TDD) recently, so I’ve been building up my unit testing toolset as I go along. I’ve got the basics covered – the industry-standard NUnit as my testing framework & test runner, and Castle Windsor if I need a bit of IOC, are the main things.

But I’m at the stage now where I know the basics, so I’m trying to improve my code and my tests, because, as anyone in TDD will tell you, if your tests are no good, they may as well not be there. So the next obvious step was a code coverage tool. At the basic end, this is a tool that you use to find out how much of your application code actually gets tested by your unit tests, which can be a useful indicator of where you need more tests – although it doesn’t say whether your tests are any good or not, even if you have 100% coverage.

Open Source Tools

Being Scottish by blood, I’m a bit of a skinflint, so like most of my other tools I wanted something open source, or at least free. This actually proved more difficult than I first imagined – most of the code coverage tools seem to be commercial, often part of a larger package. Some also required that you alter your code to use them, by adding attributes or method calls – definitely not something I wanted to do. The main suitable one I came across was PartCover, an open source project hosted on SourceForge. Thankfully, it was easy to install and set up, and did everything I wanted.

Using PartCover

There are two ways of running PartCover – a console application, which you would use with your build process to generate coverage information as XML, and a graphical browser, which lets you run the tool as you like and browse the results. I should point out I haven’t tried the console part, or using the generated XML reports, which you would probably want to do in a larger-scale development environment along with Continuous Integration etc.

Either way, the first thing you have to do is configure the tool – you need to define what executable it will run, any arguments, and any rules you want to define about what assemblies & classes to check (or not):

PartCover Settings

You can see here that I’m running NUnit console – you don’t actually have to use this with unit testing. If you want, you can just get it to fire up your application, use your app manually, and PartCover will tell you what code was run – which I imagine could come in quite handy in itself in certain situations. But as I want to analyse my unit test coverage, I get it to run NUnit, and pass in my previously created NUnit project as an argument.

Rules

I also have some rules set up. You can decide to include and exclude any combination of assemblies and classes using a certain format with wildcards – here I’ve included anything in the ‘ContactsClient’ and ‘ContactsDomain’ assemblies, apart from any classes ending in ‘Tests’ and the ‘ContactsClient.Properties’ class. It can be useful to exclude things like the UI if you’re not testing that, or maybe some generated code that you can’t test – although you shouldn’t use this to sweep things under the carpet that you just don’t want to face!

Analysis

With that done, just click ‘Start’ and you’re away – NUnit console should spring into life, run all of your tests, and you’ll be presented with the results:

PartCover Browser

As you can see, you get a tree-view style display containing the results of all your assemblies, classes and methods, colour coded as a warning. But that’s not all! Select ‘View Coverage details’ as I’ve done here, and you can actually see the lines of code which have been run, and those which have been missed. In my example above, I’ve tested the normal path through the switch statement, but neglected the error conditions – it’s exactly this type of thing that code coverage tools help you to identify, thus enabling you to improve your tests and your code.
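As a rough illustration of the kind of gap a coverage report shows up (this is not the code from the screenshot; `ContactType` and `ContactLabels` are invented for the sketch), consider a switch with an error branch. Tests that only pass valid values never execute the `default` case, so a coverage tool would mark that line as missed until a test like the last one here is added.

```csharp
using System;

// Hypothetical types, invented for this sketch.
public enum ContactType { Personal, Business }

public static class ContactLabels
{
    public static string Describe(ContactType type)
    {
        switch (type)
        {
            case ContactType.Personal:
                return "Personal contact";
            case ContactType.Business:
                return "Business contact";
            default:
                // Error path: only runs if a test passes an unknown value.
                throw new ArgumentOutOfRangeException("type");
        }
    }
}

public static class Program
{
    public static void Main()
    {
        // Normal path: on their own, these leave the default branch uncovered.
        Check(ContactLabels.Describe(ContactType.Personal) == "Personal contact");
        Check(ContactLabels.Describe(ContactType.Business) == "Business contact");

        // The extra test a coverage report would prompt: exercise the error path.
        bool threw = false;
        try
        {
            ContactLabels.Describe((ContactType)99);
        }
        catch (ArgumentOutOfRangeException)
        {
            threw = true;
        }
        Check(threw);

        Console.WriteLine("all paths exercised");
    }

    private static void Check(bool condition)
    {
        if (!condition)
        {
            throw new Exception("Check failed");
        }
    }
}
```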

At this point, I feel I have to point out a potential issue:

Warning! Trying to get 100% test coverage can be addictive!

If you’re anything like me, you may well find yourself spending many an hour trying to chase those elusive last few percent. This may or may not be a problem depending on your point of view – some people are in favour of aiming for 100% coverage, but some think it’s a waste of time. I like the point of view from Roy Osherove’s book, that you should test anything with logic in it – which doesn’t include simple getters/setters, etc.

But 100’s such a nice, round number, and I only need a couple more tests to get there..